Learning the Spark Python API Functions

- Uploaded: 2023-02-09

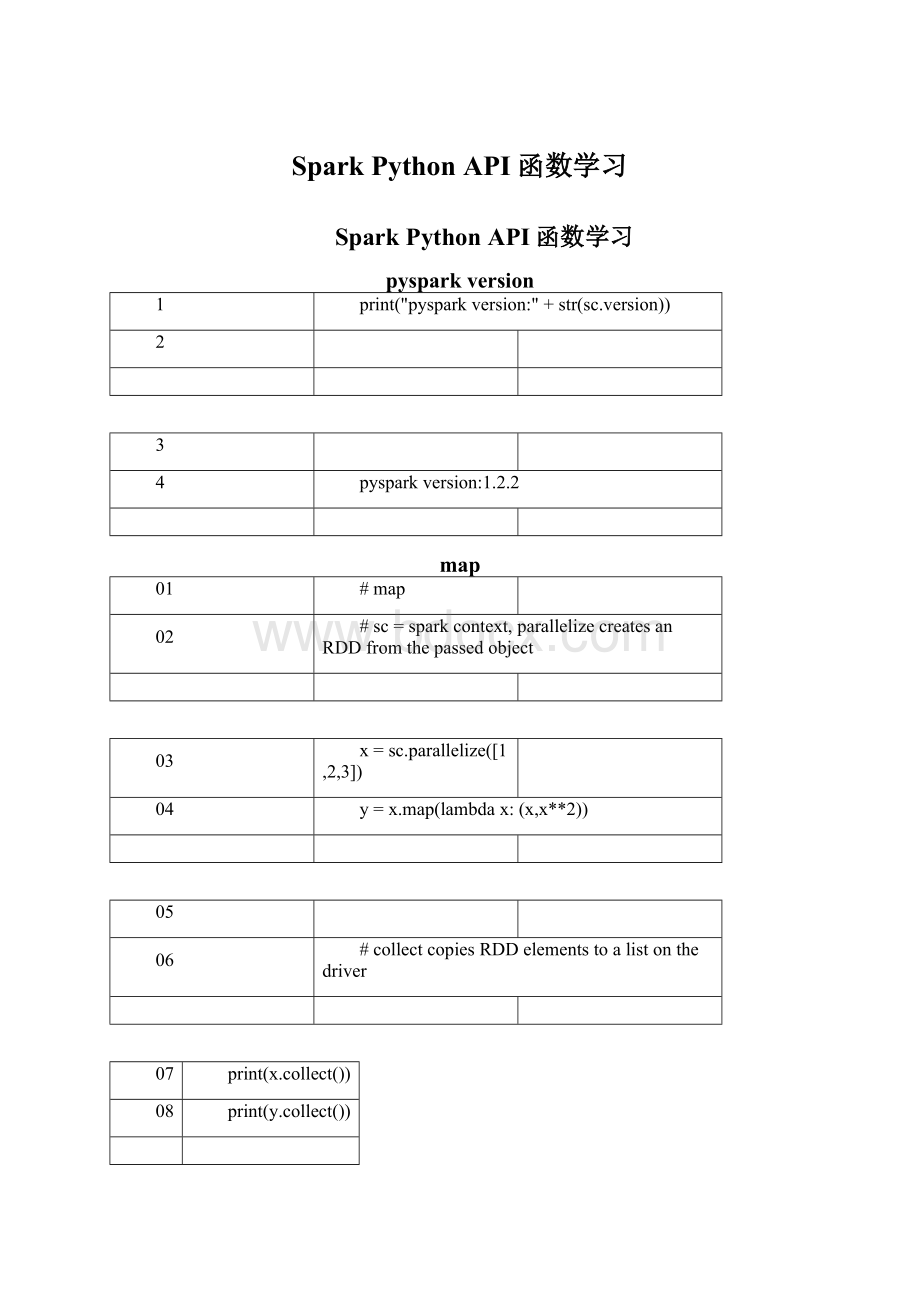

pyspark version

    print("pyspark version: " + str(sc.version))

Output:

    pyspark version: 1.2.2

map

    # map
    # sc = spark context, parallelize creates an RDD from the passed object
    x = sc.parallelize([1, 2, 3])
    y = x.map(lambda x: (x, x**2))

    # collect copies RDD elements to a list on the driver
    print(x.collect())
    print(y.collect())

Output:

    [1, 2, 3]
    [(1, 1), (2, 4), (3, 9)]

flatMap

    # flatMap
    x = sc.parallelize([1, 2, 3])
    y = x.flatMap(lambda x: (x, 100*x, x**2))
    print(x.collect())
    print(y.collect())

Output:

    [1, 2, 3]
    [1, 100, 1, 2, 200, 4, 3, 300, 9]
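The difference between map and flatMap can be mimicked without a cluster. The sketch below is plain Python, not Spark: `local_map` and `local_flat_map` are hypothetical helper names that model the two transformations on an ordinary list, assuming the function passed to flatMap returns an iterable.

```python
from itertools import chain


def local_map(func, items):
    """Model of map: exactly one output element per input element."""
    return [func(item) for item in items]


def local_flat_map(func, items):
    """Model of flatMap: apply func, then concatenate the returned iterables."""
    return list(chain.from_iterable(func(item) for item in items))


data = [1, 2, 3]
mapped = local_map(lambda v: (v, v ** 2), data)
flattened = local_flat_map(lambda v: (v, 100 * v, v ** 2), data)
print(mapped)     # tuples stay nested
print(flattened)  # tuples are unpacked into one flat list
```

With map the tuples survive as elements; with flatMap each returned tuple is unpacked, which matches the two outputs shown above.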

mapPartitions

    # mapPartitions
    x = sc.parallelize([1, 2, 3], 2)
    def f(iterator):
        yield sum(iterator)
    y = x.mapPartitions(f)
    # glom() groups the elements of each partition into a list
    print(x.glom().collect())
    print(y.glom().collect())

Output:

    [[1], [2, 3]]
    [[1], [5]]

mapPartitionsWithIndex

    # mapPartitionsWithIndex
    x = sc.parallelize([1, 2, 3], 2)
    def f(partitionIndex, iterator):
        yield (partitionIndex, sum(iterator))
    y = x.mapPartitionsWithIndex(f)

    # glom() groups the elements of each partition into a list
    print(x.glom().collect())
    print(y.glom().collect())

Output:

    [[1], [2, 3]]
    [[(0, 1)], [(1, 5)]]
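To make the per-partition behavior concrete, here is a plain-Python model (no Spark involved) of mapPartitions and mapPartitionsWithIndex. The helper names `local_map_partitions` and `local_map_partitions_with_index` are hypothetical, and the partition layout `[[1], [2, 3]]` is simply copied from the glom() output above rather than computed.

```python
def local_map_partitions(func, partitions):
    """Call func once per partition, passing an iterator over its elements."""
    return [list(func(iter(part))) for part in partitions]


def local_map_partitions_with_index(func, partitions):
    """Same as above, but func also receives the partition index."""
    return [list(func(index, iter(part))) for index, part in enumerate(partitions)]


def partition_sum(iterator):
    yield sum(iterator)


def indexed_partition_sum(index, iterator):
    yield (index, sum(iterator))


partitions = [[1], [2, 3]]  # layout taken from the glom() output
sums = local_map_partitions(partition_sum, partitions)
indexed = local_map_partitions_with_index(indexed_partition_sum, partitions)
print(sums)
print(indexed)
```

The function runs once per partition rather than once per element, which is why each partition collapses to a single value.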

getNumPartitions

    # getNumPartitions
    x = sc.parallelize([1, 2, 3], 2)
    y = x.getNumPartitions()
    print(x.glom().collect())
    print(y)

Output:

    [[1], [2, 3]]
    2

filter

    # filter
    x = sc.parallelize([1, 2, 3])
    y = x.filter(lambda x: x % 2 == 1)  # filters out even elements
    print(x.collect())
    print(y.collect())

Output:

    [1, 2, 3]
    [1, 3]

distinct

    # distinct
    x = sc.parallelize(['A', 'A', 'B'])
    y = x.distinct()
    print(x.collect())
    print(y.collect())

Output:

    ['A', 'A', 'B']
    ['A', 'B']

sample

    # sample
    x = sc.parallelize(range(7))
    # call 'sample' 5 times
    ylist = [x.sample(withReplacement=False, fraction=0.5) for i in range(5)]
    print('x = ' + str(x.collect()))
    for cnt, y in enumerate(ylist):
        print('sample: ' + str(cnt) + ' y = ' + str(y.collect()))

Output (sampled values vary from run to run):

    x = [0, 1, 2, 3, 4, 5, 6]
    sample: 0 y = [0, 2, 5, 6]
    sample: 1 y = [2, 6]
    sample: 2 y = [0, 4, 5, 6]
    sample: 3 y = [0, 2, 6]
    sample: 4 y = [0, 3, 4]

takeSample

    # takeSample
    x = sc.parallelize(range(7))
    # call 'takeSample' 5 times
    ylist = [x.takeSample(withReplacement=False, num=3) for i in range(5)]
    print('x = ' + str(x.collect()))
    for cnt, y in enumerate(ylist):
        print('sample: ' + str(cnt) + ' y = ' + str(y))  # no collect on y: takeSample returns a list

Output (sampled values vary from run to run):

    x = [0, 1, 2, 3, 4, 5, 6]
    sample: 0 y = [0, 2, 6]
    sample: 1 y = [6, 4, 2]
    sample: 2 y = [2, 0, 4]
    sample: 3 y = [5, 4, 1]
    sample: 4 y = [3, 1, 4]
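The sample outputs above vary in length while the takeSample outputs always hold three elements. A plain-Python sketch of why, under the assumption that sampling without replacement keeps each element independently with probability `fraction`: `local_sample` and `local_take_sample` are hypothetical helpers built on the standard `random` module, not Spark calls.

```python
import random


def local_sample(items, fraction, rng):
    """Keep each item independently with probability `fraction`."""
    return [item for item in items if rng.random() < fraction]


def local_take_sample(items, num, rng):
    """Return exactly `num` distinct items (or all of them if fewer exist)."""
    return rng.sample(items, min(num, len(items)))


rng = random.Random(42)  # fixed seed only so the sketch is repeatable
data = list(range(7))
bernoulli_sizes = [len(local_sample(data, 0.5, rng)) for _ in range(5)]
fixed_sizes = [len(local_take_sample(data, 3, rng)) for _ in range(5)]
print(bernoulli_sizes)  # sizes fluctuate around fraction * len(data)
print(fixed_sizes)      # always exactly 3
```

In short, `fraction` is an expected proportion, not a guaranteed size, whereas `num` is an exact count.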

union

    # union
    x = sc.parallelize(['A', 'A', 'B'])
    y = sc.parallelize(['D', 'C', 'A'])
    z = x.union(y)
    print(x.collect())
    print(y.collect())
    print(z.collect())

Output:

    ['A', 'A', 'B']
    ['D', 'C', 'A']
    ['A', 'A', 'B', 'D', 'C', 'A']

intersection

    # intersection
    x = sc.parallelize(['A', 'A', 'B'])
    y = sc.parallelize(['A', 'C', 'D'])
    z = x.intersection(y)
    print(x.collect())
    print(y.collect())
    print(z.collect())

Output:

    ['A', 'A', 'B']
    ['A', 'C', 'D']
    ['A']
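Note that union keeps duplicates while intersection does not. A rough plain-Python analogy, using list concatenation and set intersection in place of the RDD operations (the variable names are illustrative only):

```python
x = ['A', 'A', 'B']
y = ['A', 'C', 'D']

# union behaves like concatenation: nothing is deduplicated
union_like = x + y

# intersection behaves like a set operation: each common element appears once
intersection_like = sorted(set(x) & set(y))

print(union_like)
print(intersection_like)
```

If a deduplicated union is wanted in Spark, chaining distinct() after union() is the usual approach.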

sortByKey

    # sortByKey
    x = sc.parallelize([('B', 1), ('A', 2), ('C', 3)])
    y = x.sortByKey()
    print(x.collect())
    print(y.collect())

Output:

    [('B', 1), ('A', 2), ('C', 3)]
    [('A', 2), ('B', 1), ('C', 3)]

sortBy

    # sortBy
    x = sc.parallelize(['Cat', 'Apple', 'Bat'])
    def keyGen(val):
        return val[0]
    y = x.sortBy(keyGen)
    print(y.collect())

Output:

    ['Apple', 'Bat', 'Cat']

glom

    # glom
    x = sc.parallelize(['C', 'B', 'A'], 2)
    y = x.glom()
    print(x.collect())
    print(y.collect())

Output:

    ['C', 'B', 'A']
    [['C'], ['B', 'A']]

cartesian

    # cartesian
    x = sc.parallelize(['A', 'B'])
    y = sc.parallelize(['C', 'D'])
    z = x.cartesian(y)
    print(x.collect())
    print(y.collect())
    print(z.collect())

Output:

    ['A', 'B']
    ['C', 'D']
    [('A', 'C'), ('A', 'D'), ('B', 'C'), ('B', 'D')]
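For readers more familiar with the standard library, cartesian corresponds to `itertools.product` applied to two local lists. This is only an analogy on in-memory data, not a Spark call:

```python
from itertools import product

x = ['A', 'B']
y = ['C', 'D']

# every element of x paired with every element of y
pairs = list(product(x, y))
print(pairs)
```

The result size is len(x) * len(y), so on large RDDs a cartesian product grows quickly.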

groupBy

    # groupBy
    x = sc.parallelize([1, 2, 3])
    y = x.groupBy(lambda x: 'A' if (x % 2 == 1) else 'B')
    print(x.collect())
    # y is nested, this iterates through it
    print([(j[0], [i for i in j[1]]) for j in y.collect()])

Output:

    [1, 2, 3]
    [('A', [1, 3]), ('B', [2])]
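The grouping logic itself can be sketched in plain Python. `local_group_by` is a hypothetical helper that models groupBy on a local list with a dictionary of lists; it sorts the keys only to make the printed result deterministic, which Spark itself does not promise.

```python
from collections import defaultdict


def local_group_by(key_func, items):
    """Collect items into lists keyed by key_func(item)."""
    groups = defaultdict(list)
    for item in items:
        groups[key_func(item)].append(item)
    return sorted(groups.items())  # sorted only for a stable printout


grouped = local_group_by(lambda v: 'A' if v % 2 == 1 else 'B', [1, 2, 3])
print(grouped)
```

Each key maps to every element that produced it, which is why the Spark result has to be iterated through before printing.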

pipe

    # pipe
    x = sc.parallelize(['A', 'Ba', 'C', 'AD'])
    y = x.pipe('grep -i "A"')  # calls out to grep, may fail under Windows
    print(x.collect())
    print(y.collect())

Output:

    ['A', 'Ba', 'C', 'AD']
    ['A', 'Ba', 'AD']

foreach

    # foreach
    from __future__ import print_function
    x = sc.parallelize([1, 2, 3])
    def f(el):
        '''side effect: append the current RDD elements to a file'''
        # the with-block closes the file after each write
        with open("./foreachExample.txt", 'a+') as f1:
            print(el, file=f1)

    # first clear the file contents
    open('./foreachExample.txt', 'w').close()

    y = x.foreach(f)  # writes into foreachExample.txt

    print(x.collect())
    print(y)  # foreach returns 'None'
    # print the contents of foreachExample.txt
    with open("./foreachExample.txt", "r") as foreachExample:
        print(foreachExample.read())

Output (the order of the written elements may vary):

    [1, 2, 3]
    None
    3
    1
    2

foreachPartition

    # foreachPartition
    from __future__ import print_function
    x = sc.parallelize([1, 2, 3], 5)
    def f(partition):
        '''side effect: append the current RDD partition contents to a file'''
        # the with-block closes the file after each write
        with open("./foreachPartitionExample.txt", 'a+') as f1:
            print([el for el in partition], file=f1)

    # first clear the file contents
    open('./foreachPartitionExample.txt', 'w').close()

    y = x.foreachPartition(f)  # writes into foreachPartitionExample.txt

    print(x.glom().collect())
    print(y)  # foreachPartition returns 'None'
    # print the contents of foreachPartitionExample.txt
    with open("./foreachPartitionExample.txt", "r") as foreachExample:
        print(foreachExample.read())

Output (the order of the written partitions may vary):

    [[], [1], [], [2], [3]]
    None
    []
    []
    [1]
    [2]
    [3]

collect

    # collect
    x = sc.parallelize([1, 2, 3])
    y = x.collect()
    print(x)  # distributed
    print(y)  # not distributed

Output:

    ParallelCollectionRDD[87] at parallelize at 382
    [1, 2, 3]

reduce

    # reduce
    x = sc.parallelize([1, 2, 3])
    y = x.reduce(lambda obj, accumulated: obj + accumulated)  # computes a cumulative sum
    print(x.collect())
    print(y)

Output:

    [1, 2, 3]
    6
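reduce expects an operator that is both commutative and associative, because partial results are computed per partition and then combined. The plain-Python model below shows what can go wrong otherwise; `local_reduce` is a hypothetical helper, and the partition layouts are chosen by hand for illustration.

```python
from functools import reduce
from operator import add, sub


def local_reduce(op, partitions):
    """Reduce each non-empty partition, then reduce the partial results."""
    partials = [reduce(op, part) for part in partitions if part]
    return reduce(op, partials)


two_parts = [[1], [2, 3]]
one_part = [[1, 2, 3]]

sum_two = local_reduce(add, two_parts)
sum_one = local_reduce(add, one_part)
diff_two = local_reduce(sub, two_parts)
diff_one = local_reduce(sub, one_part)

print(sum_two, sum_one)    # addition: same answer for both layouts
print(diff_two, diff_one)  # subtraction: answer depends on the layout
```

Addition gives 6 under either layout, while subtraction gives 2 with two partitions and -4 with one, so a non-associative operator makes the result depend on how the data happens to be split.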

fold

    # fold
    x = sc.parallelize([1, 2, 3])
    neutral_zero_value = 0  # 0 for sum, 1 for multiplication
    y = x.fold(neutral_zero_value, lambda obj, accumulated: accumulated + obj)  # computes cumulative sum
    print(x.collect())
    print(y)

Output:

    [1, 2, 3]
    6
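The zero value has to be neutral because it is applied more than once: once when folding each partition and once more when merging the partition results. A plain-Python model of that behavior follows; `local_fold` is a hypothetical helper and the partition layouts are assumed for illustration.

```python
from functools import reduce
from operator import add


def local_fold(zero, op, partitions):
    """Fold each partition starting from zero, then fold the partials from zero."""
    partials = [reduce(op, part, zero) for part in partitions]
    return reduce(op, partials, zero)


neutral = local_fold(0, add, [[1], [2, 3]])
skewed_two_parts = local_fold(10, add, [[1], [2, 3]])
skewed_one_part = local_fold(10, add, [[1, 2, 3]])

print(neutral)           # the plain sum
print(skewed_two_parts)  # zero value counted three times
print(skewed_one_part)   # zero value counted twice
```

With a zero value of 10, the two-partition layout yields 36 and the single-partition layout yields 26, so a non-neutral zero value makes the result depend on the partition count.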

aggregate

    # aggregate
    x = sc.parallelize([2, 3, 4])
    neutral_zero_value = (0, 1)  # sum: x+0 = x, product: 1*x = x
    seqOp = (lambda aggregated, el: (aggregated[0] + el, aggregated[1] * el))
    combOp = (lambda aggregated, el: (aggregated[0] + el[0], aggregated[1] * el[1]))
    y = x.aggregate(neutral_zero_value, seqOp, combOp)  # computes (cumulative sum, cumulative product)
    print(x.collect())
    print(y)

Output:

    [2, 3, 4]
    (9, 24)
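The two operators play different roles: seqOp folds raw elements into a per-partition accumulator, and combOp merges two accumulators. The sketch below models this in plain Python; `local_aggregate` is a hypothetical helper, and the partition layouts are assumed rather than taken from a real cluster.

```python
from functools import reduce


def local_aggregate(zero, seq_op, comb_op, partitions):
    """Apply seq_op within each partition, then comb_op across the partials."""
    partials = [reduce(seq_op, part, zero) for part in partitions]
    return reduce(comb_op, partials, zero)


def seq_op(acc, el):
    # acc is a (sum, product) pair, el is a raw element
    return (acc[0] + el, acc[1] * el)


def comb_op(left, right):
    # both arguments are (sum, product) pairs
    return (left[0] + right[0], left[1] * right[1])


split_a = local_aggregate((0, 1), seq_op, comb_op, [[2], [3, 4]])
split_b = local_aggregate((0, 1), seq_op, comb_op, [[2, 3], [4]])
print(split_a)
print(split_b)
```

Because (0, 1) is neutral for both sum and product, either layout produces the same (sum, product) pair as the Spark example.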

max

    # max
    x = sc.parallelize([1, 3, 2])
    y = x.max()
    print(x.collect())
    print(y)

Output:

    [1, 3, 2]
    3

min

    # min
    x = sc.parallelize([1, 3, 2])
    y = x.min()
    print(x.collect())
    print(y)

Output:

    [1, 3, 2]
    1

sum

    # sum
    x = sc.parallelize([1, 3, 2])
    y = x.sum()
    print(x.collect())
    print(y)

Output:

    [1, 3, 2]
    6

count

    # count
    x = sc.parallelize([1, 3, 2])
    y = x.count()
    print(x.collect())
    print(y)

Output:

    [1, 3, 2]
    3

histogram

    # histogram (example #1)
    x = sc.parallelize([1, 3, 1, 2, 3])
    y = x.histogram(buckets=2)
    print(x.collect())
    print(y)

Output:

    [1, 3, 1, 2, 3]
    ([1, 2, 3], [2, 3])
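The result pairs the bucket boundaries with the count in each bucket. A plain-Python sketch of the evenly spaced case, assuming buckets are half-open except the last one, which also includes the maximum: `local_histogram` is a hypothetical helper, not the Spark implementation.

```python
def local_histogram(values, buckets):
    """Split [min, max] into `buckets` equal-width bins and count the values."""
    lo, hi = min(values), max(values)
    if lo == hi:
        # degenerate range: a single bucket holds everything
        return [lo, hi], [len(values)]
    width = (hi - lo) / buckets
    edges = [lo + i * width for i in range(buckets)] + [hi]
    counts = [0] * buckets
    for v in values:
        # the maximum falls on the last edge, so clamp it into the last bucket
        index = min(int((v - lo) / width), buckets - 1)
        counts[index] += 1
    return edges, counts


edges, counts = local_histogram([1, 3, 1, 2, 3], 2)
print(edges)
print(counts)
```

Here the two 1s land in the first bucket [1, 2), and the 2 and both 3s land in the second bucket [2, 3], giving counts of 2 and 3.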

    # histogram (example #2)
    x = sc.parallelize([1, 3, 1, 2, 3])
    y = x.histogram([0, 0.5, 1, 1.5, 2, 2.5, 3, 3.5])
    print(x.collect())
    print(y)

Output:

    [1, 3, 1, 2,
